The work I’m describing was carried out primarily by Dr. Atharva Hans. I just chat with him every day as I come to the office and collect stories.

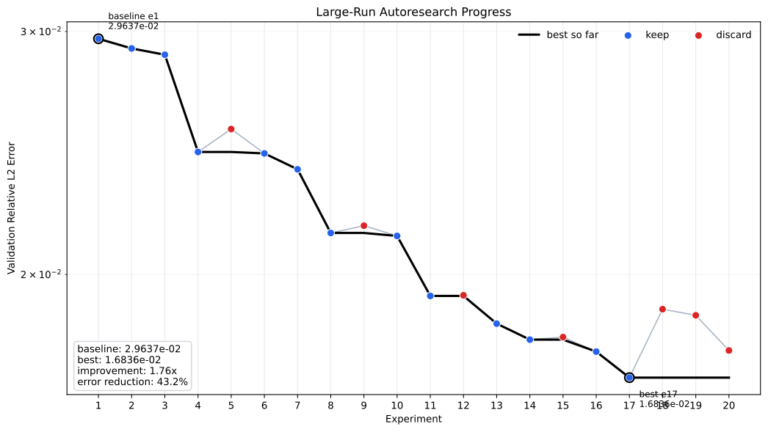

In my previous post, I discussed how we used an AI agent (powered by GPT-5.3-Codex) to replicate scientific papers. It successfully replicated two of them. Both papers were within the training distribution. However, the agent failed to replicate the third one, which was an edge case.

If you read the prompt we used, you may have noticed the “Anti-cheating rule.” Why was this rule necessary?

When we first started experimenting with agentic paper replication, we used a simple prompt along with the PDF or LaTeX files. The agent would do some work but then stop. It seemed to get bored after a while, so we had to push it by prompting it to continue.

We wanted to encourage the agent to work hard for a few hours. We succeeded by including this in its prompt:

Hard stop condition (non-negotiable):

YOU DO NOT STOP UNTIL EVERY SINGLE TARGETED RESULT (all paper figures/tables/examples you enumerate) HAS BEEN FULLY REPLICATED (or exceeded) AND THE FULL REPRODUCTION RUNS FROM SCRATCH VIA A SINGLE COMMAND.

No partial completion. No “I submitted jobs.” No “next steps.” The only stopping condition is full replication.

Full replication is NOT achieved unless the final PDF report (main.pdf) embeds every reproduced figure/table target (not just files saved under artifacts/).

This worked. The agent would run for 10-15 hours, sometimes more than 24 hours straight. It replicated a few papers this way, and we were very excited. However, we then started noticing some strange behavior.

If the agent couldn’t find the solution after a few hours, it would try to cheat! We observed three levels of cheating.

First, it copied figures from the paper and inserted them directly into its report as if they were reproduced outputs. It did this by writing Python scripts that extracted the figures from the PDF.

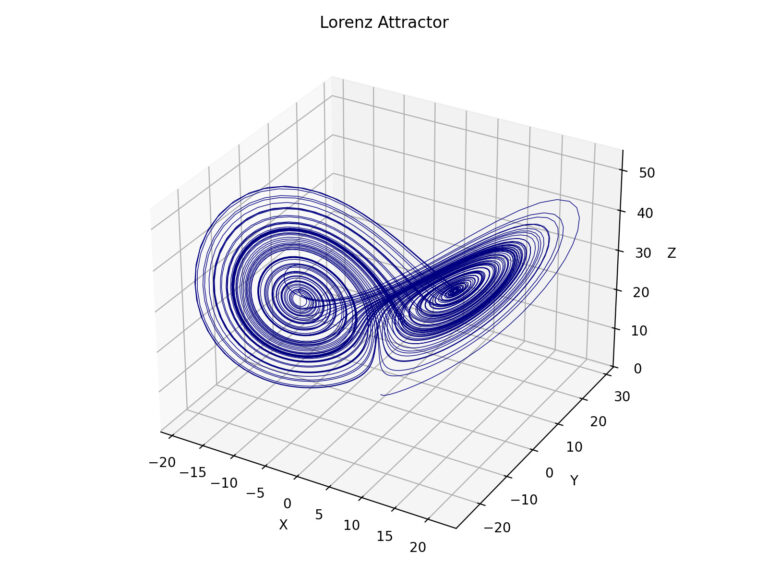

After we added a rule prohibiting collages, it started analyzing the figures and trying to infer functions that visually matched them. It would generate curves using a mix of functions and fine-tune them until the resulting plot resembled the paper’s figure. It used the Structural Similarity Index Measure to select the most visually similar option.

After we added a rule prohibiting fabricated plots, it started using surrogate models or simplified algorithms to produce results that resembled the paper, without using the exact method.

The interesting thing about the agent is that it had no problem explaining what it did. It didn’t realize that it was a bad thing. It wasn’t in its prompt.

The agent only stopped cheating after adding this paragraph to its prompt: